In a watershed moment for the healthcare industry, more than 2,400 mental health professionals in Northern California have walked off the job in the 2026 Kaiser Permanente strike. Therapists, psychologists, and clinical social workers are raising the alarm over what they describe as an unsustainable work environment, marked by grueling back-to-back scheduling and the rapid, unchecked integration of artificial intelligence into patient care. The walkout has thrust the growing controversy over AI in therapy into the national spotlight, prompting urgent questions about patient privacy, the erosion of personalized medicine, and the future of human connection in mental health treatment.

The Breaking Point: Burnout and Ambient AI Technology

The immediate catalyst for the strike is chronic burnout among mental health workers, compounded by technological change. Kaiser Permanente recently introduced AI-powered "ambient listening" software from vendors such as Abridge, which records patient visits and automatically generates clinical notes. Healthcare administrators argue that this technology reduces administrative strain by freeing providers from hours of tedious documentation.

However, clinicians on the picket lines see a different reality. Union representatives argue that while reducing paperwork is a valid goal, ambient AI could serve as a Trojan horse: they fear administrators will use the tools' promised efficiency to justify severe understaffing and demand even higher daily patient loads. For providers already exhausted by unrelenting schedules, the prospect of squeezing more appointments into each shift threatens both their own well-being and the quality of care they can deliver.

Patient Privacy and the Scripted Experience

Patients are also feeling the shift. Some individuals receiving telehealth services report a noticeable change in the therapeutic dynamic, describing providers who seem to be reading from AI-generated scripts. Introducing third-party listening tools also raises concerns about patient data and HIPAA compliance, fueling the broader debate over ethics in digital mental health. If large language models are processing deeply personal histories of trauma and mental illness, the potential for data misuse or unauthorized sharing remains a critical blind spot.

APA and FTC Crack Down on Digital Mental Health

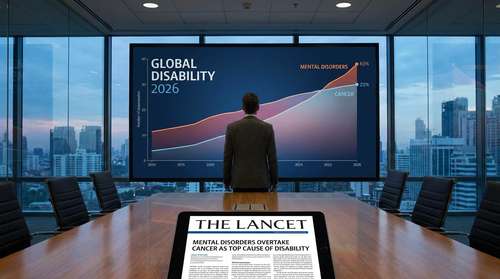

The Kaiser Permanente walkout is not an isolated incident; it is a microcosm of a much larger battle over how to regulate AI in mental health. The technological gold rush to automate healthcare has drawn the attention of federal regulators and leading psychological institutions alike.

The American Psychological Association has pushed aggressively for federal oversight. Following tragic incidents in which vulnerable teenagers interacted with unregulated AI chatbot companions, including bots on platforms such as Character.AI that falsely presented themselves as licensed therapists, the APA urged the Federal Trade Commission to intervene. The commission has since opened a wide-ranging inquiry into several major technology companies under Section 6(b) of the FTC Act, demanding detailed disclosures about how these consumer-facing chatbots are tested for safety, how user data is monetized, and what specific safeguards are in place to prevent harm to minors.

Dr. Vaile Wright, the APA's senior director of health care innovation, has been vocal about the dangers of algorithms operating without a license. When an AI tool hallucinates or suggests inappropriate coping strategies to a patient in crisis, the consequences can be devastating, both in legal liability and in human cost. This regulatory crackdown underscores the fear motivating the striking Kaiser employees: technology is moving faster than the guardrails needed to protect patients.

The Core Debate: Human-Led Therapy vs AI

At the heart of this labor dispute is the fundamental nature of psychological healing. The debate over human-led therapy versus AI hinges on whether an algorithm can replicate the nuanced rupture-and-repair dynamics that underpin effective counseling. Therapy requires emotional intelligence, the ability to read unspoken physical cues, and the establishment of long-term relational trust.

Artificial intelligence, despite its impressive linguistic capabilities, operates on pattern recognition rather than empathy. Striking professionals argue that adopting AI wholesale across mental health care compromises patient outcomes. When a patient is experiencing severe depression or acute anxiety, a synthesized response cannot replace the deeply human intuition of a trained clinical social worker or psychologist.

What This Means for the Future of Healthcare

As the strike continues across Northern California, the stakes extend far beyond the bargaining table. The outcome will likely set an important precedent for how major medical systems nationwide handle the integration of artificial intelligence, and hospital administrators are watching closely. If Kaiser Permanente standardizes ambient listening tools and AI-assisted charting while still meeting union demands for safe staffing ratios, it could create a blueprint for hybrid care models. Conversely, if the union secures strict limits on AI deployment, it may slow the technology's rollout across the entire sector.

For now, more than 2,400 professionals are standing firm outside medical centers, holding the line for a model of care that values the human mind over the machine. The resolution of this standoff will signal to patients and providers alike whether the future of mental health care will be guided by clinical expertise or by algorithmic efficiency.